When Google launched Nano Banana 2 on February 26, 2026, the AI image generation landscape gained something production teams needed: a model optimizing for throughput without sacrificing professional output quality.

The narrative around AI image generators has centered on artistic quality - Midjourney's atmospheric renders, DALL-E 3's prompt adherence, and Stable Diffusion's customization potential. Nano Banana 2 addresses a different constraint: generation speed as a production bottleneck.

The Architecture Foundation

Google's Workspace rollout notes identify Nano Banana 2 as Gemini 3.1 Flash Image. Public documentation positions it as a Gemini-family multimodal model optimized for fast image generation and editing workflows.

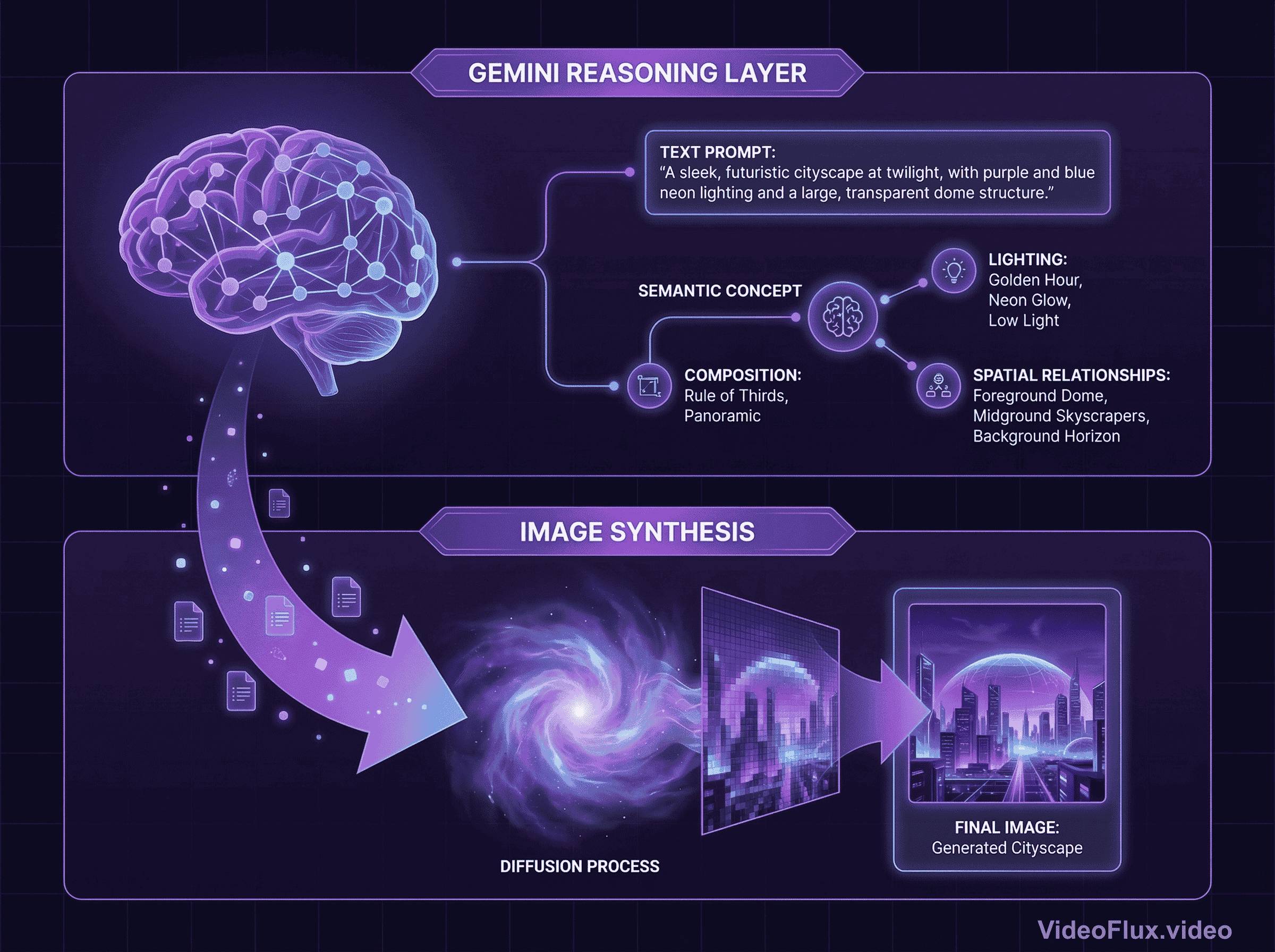

Traditional image generators process prompts as weighted token sequences, decomposing text into statistical patterns before sampling from noise to generate outputs. Nano Banana 2's architecture processes prompts through Gemini's reasoning layer first, interpreting composition, lighting, and spatial relationships as semantic concepts before image synthesis begins.

Figure 1: Reasoning-first generation architecture


Google's official messaging emphasizes Flash-speed generation with professional-oriented controls, but does not publish a single universal benchmark protocol covering all platforms and prompts.

Evidence Snapshot (Official Sources)

The following points are directly grounded in first-party documentation:

| Evidence item | What is verifiable | Primary source |
|---|---|---|
| Launch timing | Google announced Nano Banana 2 on February 26, 2026. | Google Blog |
| Product positioning | Nano Banana 2 is framed as fast image generation/editing in Gemini surfaces. | Google Workspace Updates 2026 |
| Model family context | Gemini Flash Image is positioned for speed-oriented generation workflows. | Google Developers Blog |
| Enterprise/API deployment path | Gemini Flash Image is documented for Vertex AI usage. | Google Cloud Docs |
| Pricing authority | API pricing should be taken from official Google pricing pages. | Gemini API Pricing |

This article treats third-party benchmarks as directional context and treats first-party product docs as the primary evidence layer.

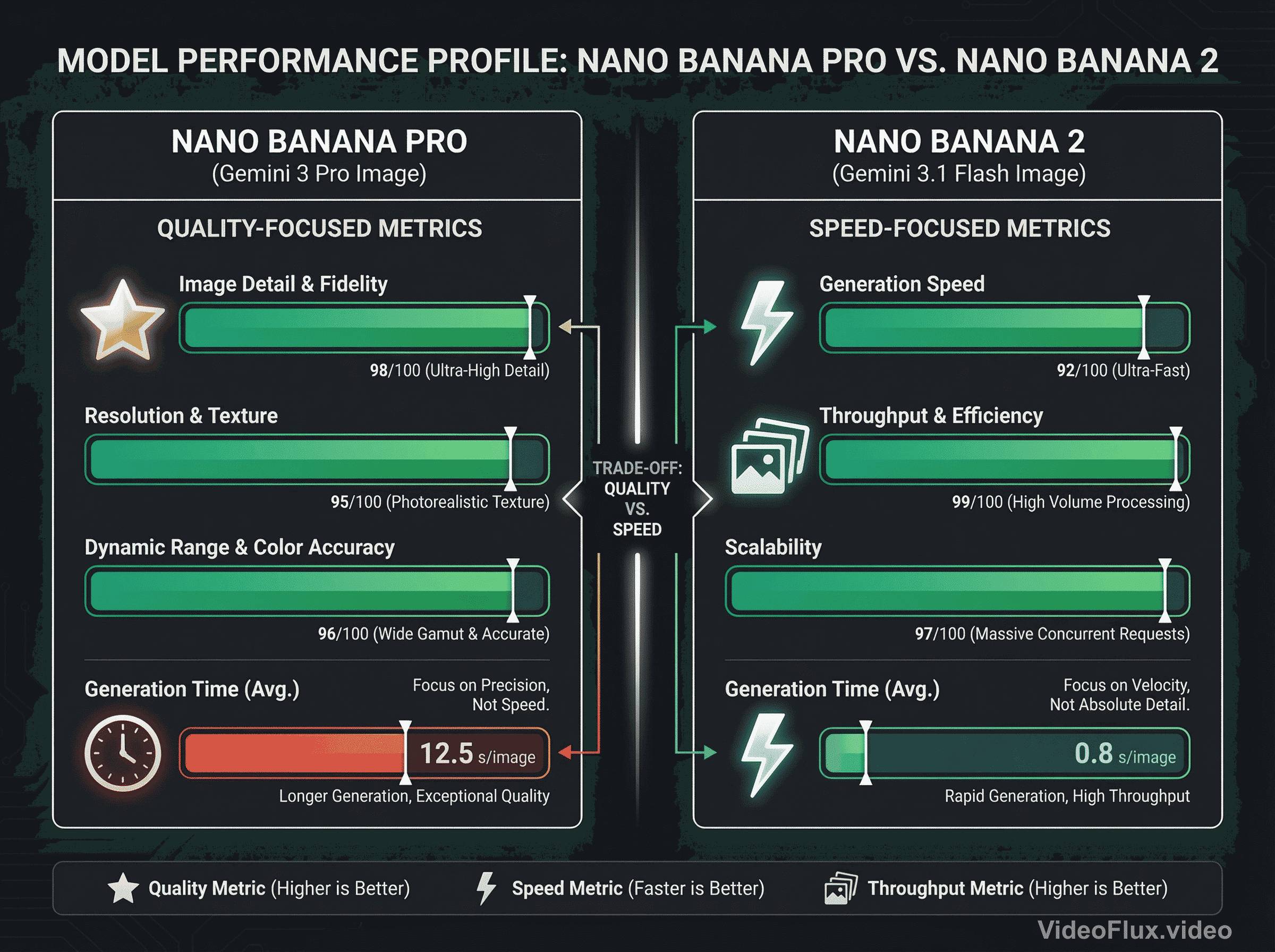

The Speed vs Quality Spectrum: Flash vs Pro

Google's Nano Banana lineup presents distinct production tradeoffs:

Nano Banana Pro (Gemini 3 Pro Image): positioned for higher-depth generation and editing in Google's product messaging.

Nano Banana 2 (Gemini 3.1 Flash Image): positioned for faster generation and iteration in production workflows.

Figure 2: Generation time characteristics across Nano Banana models


Throughput differences become operationally significant at scale. In production, teams should benchmark their own prompt sets and queue conditions rather than rely on single-source latency claims.
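A minimal sketch of such an in-house benchmark follows. It times any callable that takes a prompt string, so it stays independent of a particular SDK. The `benchmark` and `fake_generate` names are hypothetical, and `fake_generate` is only a stand-in that you would replace with your own client call.

```python
import math
import statistics
import time
from typing import Callable, Dict, List


def benchmark(generate: Callable[[str], object],
              prompts: List[str],
              runs: int = 3) -> Dict[str, float]:
    """Call `generate` on every prompt `runs` times; summarize latency in seconds."""
    if not prompts or runs < 1:
        raise ValueError("need at least one prompt and one run")

    latencies = []
    for prompt in prompts:
        for _ in range(runs):
            start = time.perf_counter()
            generate(prompt)
            latencies.append(time.perf_counter() - start)

    latencies.sort()
    # Nearest-rank p95: smallest sample with at least 95% of samples at or below it.
    p95_index = max(0, math.ceil(0.95 * len(latencies)) - 1)
    return {
        "count": len(latencies),
        "mean_s": statistics.mean(latencies),
        "median_s": statistics.median(latencies),
        "p95_s": latencies[p95_index],
        "max_s": latencies[-1],
    }


def fake_generate(prompt: str) -> bytes:
    """Placeholder for a real image-generation call (assumption, not a real API)."""
    time.sleep(0.01)
    return b""
```

Calling `benchmark(fake_generate, your_prompts)` returns a summary dictionary. Running it at different times of day, and with your real prompt set, gives a better picture of queue conditions than any single published latency number.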

For text-heavy assets, Google highlights precision text rendering and translation support for Nano Banana 2 in Workspace documentation.

Text Rendering Accuracy

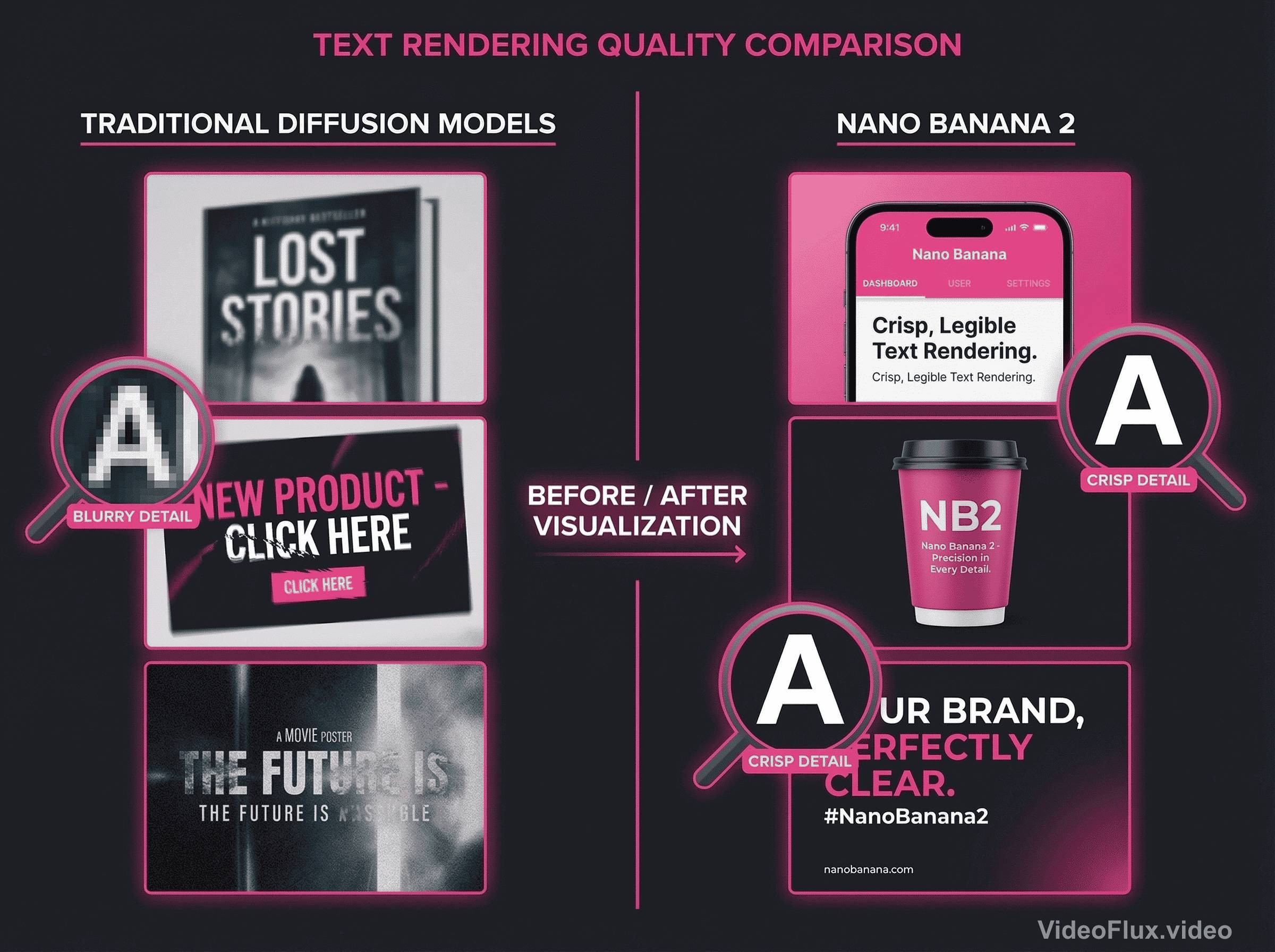

The AI image generation discourse focuses heavily on photorealism and artistic style. Text rendering receives less attention despite representing a fundamental production requirement for marketing materials, product mockups, social media content, and UI designs.

Google's public materials describe Nano Banana 2 as improved for legible text rendering and localization/translation workflows. In production workflows, better text rendering usually reduces iteration cycles for branded visuals, UI mockups, and ad creatives.

Figure 3: Text rendering accuracy comparison


Traditional diffusion models face challenges with text rendering, as character formation requires precise spatial consistency. Nano Banana 2's reasoning-first architecture processes text as structured information before generation, contributing to improved accuracy.

Google Image Search Grounding

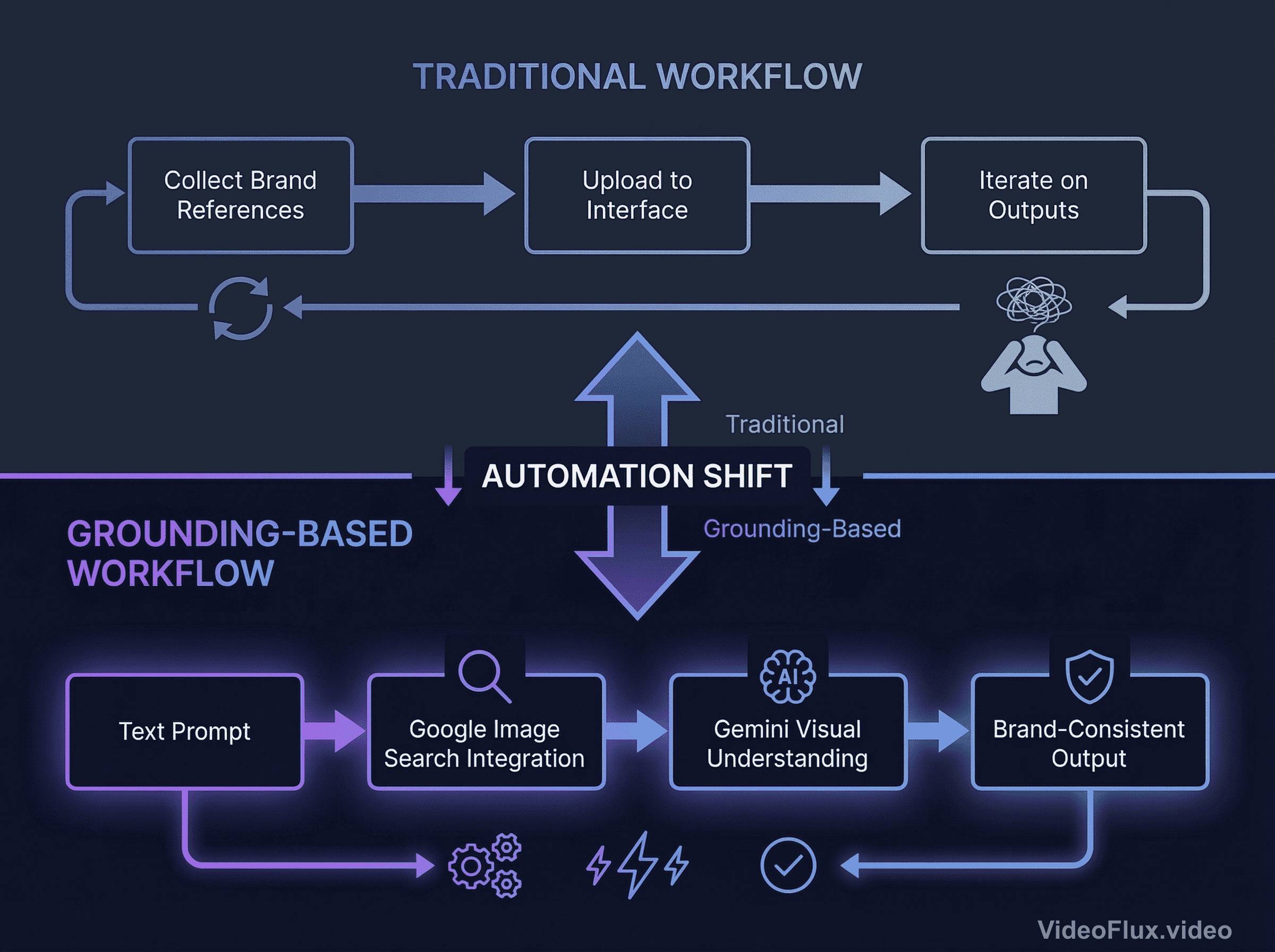

Workspace release notes describe Nano Banana 2 as using Gemini world knowledge and web search context for subject rendering in supported surfaces.

This addresses brand consistency across generated assets without manual reference collection. Traditional workflows require manually collecting brand visual references, uploading them to the generation interface, and iterating when outputs drift from brand guidelines.

When grounded generation is available in a given product surface, the model can supply much of the subject context itself, which reduces how many references users need to gather by hand.

Figure 4: Workflow comparison with automatic visual grounding


The technical implementation leverages Gemini's multimodal architecture. When activated, the system queries Google Image Search, processes retrieved images through the visual understanding pathway, and conditions generation on that visual context alongside text prompts.

Use-case fit should still be validated with internal prompts and review criteria, especially for regulated or brand-sensitive content.

Real-World Performance Comparison

Public sources suggest distinct positioning across generation speed, quality characteristics, and cost:

Speed and capability positioning:

- Nano Banana 2: positioned around speed, instruction following, and text handling

- Alternative models: often differentiated by style control, customization, or editing workflow

Cost structure:

- Nano Banana 2: check current Gemini API / Vertex AI pricing pages for official rates

- DALL-E / Midjourney / Flux: pricing depends on vendor plans and access tier
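To compare per-image API billing against a flat subscription, a simple break-even calculation is often enough. The sketch below is illustrative only: the function names are hypothetical and the numbers in the usage note are placeholders, not real vendor prices. Take actual rates from the official pricing pages.

```python
import math


def api_monthly_cost(images_per_month: int, price_per_image: float) -> float:
    """Total monthly spend under per-image billing."""
    return images_per_month * price_per_image


def break_even_images(subscription_per_month: float, price_per_image: float) -> int:
    """Smallest monthly volume at which per-image billing costs at least
    as much as the flat subscription."""
    if price_per_image <= 0:
        raise ValueError("price_per_image must be positive")
    return math.ceil(subscription_per_month / price_per_image)
```

With placeholder inputs of a 50.0 per month subscription and 0.25 per image, `break_even_images(50.0, 0.25)` gives 200 images. Below that volume per-image billing is cheaper under these assumed numbers, and above it the subscription is, provided the subscription plan actually allows that volume.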

Nano Banana 2 SEO FAQ

What is Nano Banana 2 in Gemini?

Nano Banana 2 is Google's fast image-generation model in Gemini surfaces, described as Gemini 3.1 Flash Image in rollout materials.

Is Nano Banana 2 good for text rendering?

Google positions Nano Banana 2 around strong text rendering and translation-friendly generation workflows for production assets.

Nano Banana 2 vs Midjourney: which is better?

A practical answer depends on workload: teams often prefer Nano Banana 2 for speed and iteration, and Midjourney for style-heavy artistic exploration.

Where can I check Nano Banana 2 pricing?

Use official Google pricing pages (Gemini API and Vertex AI docs) because pricing and packaging can change over time.

Production Deployment Considerations

The speed advantage creates deployment opportunities where throughput constraints previously limited workflows. Common application scenarios include:

High-volume production: Platforms generating hundreds of images benefit from throughput improvements. Reducing per-image generation time enables increased output within existing infrastructure.

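Interactive workflows: Creative teams generating multiple concept variations gain responsiveness when individual generations complete in a few seconds rather than tens of seconds (for illustration, 3-6 seconds versus 20-30 seconds; measure your own workload before relying on such figures).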

Automated pipelines: Marketing systems integrating AI image generation face latency constraints. Faster generation enables workflows like personalized imagery generation, dynamic social content, and region-specific variations.

Response-critical applications: Use cases requiring sub-10-second generation latencies - like AI-assisted design tools and interactive platforms - become architecturally viable with Nano Banana 2's speed profile.
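The effect of per-image latency on volume is simple arithmetic. The sketch below uses a hypothetical helper, `images_per_hour`, and assumes perfectly parallel workers with no rate limits, retries, or queueing, so it gives an upper bound rather than a forecast.

```python
def images_per_hour(seconds_per_image: float, concurrency: int = 1) -> float:
    """Upper-bound throughput for a given per-image latency.

    Assumes `concurrency` independent workers and ignores rate limits,
    retries, and queueing delays.
    """
    if seconds_per_image <= 0 or concurrency < 1:
        raise ValueError("latency must be positive and concurrency at least 1")
    return 3600.0 / seconds_per_image * concurrency
```

Using the illustrative latencies mentioned above, a single worker at 6 seconds per image tops out at 600 images per hour, while one at 30 seconds per image tops out at 120. The same fivefold gap persists at any concurrency level, which is why latency differences matter more as volume grows.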

Trade-offs and Limitations

Nano Banana 2 doesn't dominate every metric:

Artistic quality: model preference depends on task and visual style goals; this post does not claim a universal winner.

Customization flexibility: Stable Diffusion variants offer extensive customization through LoRAs, fine-tuning, and model merging. Teams requiring specialized visual styles benefit from this flexibility.

Cost predictability: Subscription-based models can simplify budgeting; API billing can be better for programmatic scaling.

Prompt complexity: DALL-E 3's conversational interface reduces technical barriers for non-specialist users. Nano Banana 2's performance benefits from structured prompting.
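One way to keep prompts structured is to assemble them from named fields instead of free text. The sketch below is an assumption about what "structured" could look like in practice: the `PromptSpec` class and its field names are hypothetical, and nothing in Google's documentation requires this particular layout.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PromptSpec:
    """Hypothetical container for the parts of an image prompt."""
    subject: str
    composition: Optional[str] = None
    lighting: Optional[str] = None
    exact_text: Optional[str] = None  # text that must appear verbatim in the image

    def render(self) -> str:
        """Join the populated fields into a single prompt string."""
        parts = [f"Subject: {self.subject}."]
        if self.composition:
            parts.append(f"Composition: {self.composition}.")
        if self.lighting:
            parts.append(f"Lighting: {self.lighting}.")
        if self.exact_text:
            parts.append(f'Render this text exactly: "{self.exact_text}".')
        return " ".join(parts)
```

A template like this makes prompt sets easier to review and to rerun across models, since each field can be varied independently when comparing outputs.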

Market Positioning

The AI image generation market has matured beyond single-dimension quality optimization. Production workflows introduce constraints - throughput requirements, budget limitations, and integration complexity - that pure quality metrics do not capture.

Nano Banana 2 optimizes for teams generating substantial volumes of professional-quality imagery where text accuracy, brand consistency, and generation speed determine operational viability. This represents a market segment requiring different optimization than purely artistic or purely speed-focused alternatives.

The architectural foundation - reasoning-first generation built on Gemini's multimodal capabilities - suggests an incremental development path. As Google's Gemini models evolve, improvements in reasoning quality and multimodal understanding should carry over to Nano Banana performance.

Deployment Decision Framework

The evidence above suggests the following deployment criteria:

Deploy Nano Banana 2 when:

- Generating hundreds or thousands of images weekly

- Text rendering accuracy critically impacts asset usability

- Enforcing brand consistency across generated content

- Throughput constraints limit iteration potential

- Integrating with Google Cloud infrastructure

Consider alternative models when:

- Artistic quality matters more than throughput (Midjourney)

- Extensive customization and model fine-tuning required (Stable Diffusion)

- Subscription pricing provides cost advantages (Midjourney)

- Conversational editing workflows fit team capabilities (DALL-E 3)

Multi-model strategies often prove optimal for mixed workflows. Different models serve different production needs: high-volume brand-consistent production, creative exploration, specialized visual styles.
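The criteria above can be encoded as a small routing function for a multi-model setup. This is a sketch under stated assumptions: the `Workload` fields, the order in which rules are checked, and the 100-images-per-week threshold are all illustrative choices, not values from any vendor documentation.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of an image-generation job."""
    images_per_week: int
    text_accuracy_critical: bool = False
    needs_fine_tuning: bool = False
    style_exploration: bool = False
    conversational_editing: bool = False


def suggest_model(w: Workload) -> str:
    """Map a workload to a candidate model using the article's criteria.

    Rule order and the volume threshold are assumptions for illustration.
    """
    if w.needs_fine_tuning:
        return "Stable Diffusion"
    if w.style_exploration and not w.text_accuracy_critical:
        return "Midjourney"
    if w.conversational_editing:
        return "DALL-E 3"
    if w.text_accuracy_critical or w.images_per_week >= 100:
        return "Nano Banana 2"
    return "benchmark candidates first"
```

The output is a starting candidate, not a verdict. Teams would still validate it against their own prompts and review criteria before committing a workflow to one model.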

Broader Implications

Nano Banana 2's positioning reflects maturation across AI generation tools: specialized optimization for distinct workflow requirements rather than general-purpose quality competition.

This specialization benefits the ecosystem. Models can optimize for speed, artistic depth, customization, or integration simplicity. Teams can choose based on deployment needs rather than abstract quality claims.

As AI image generation transitions from experimental to production infrastructure, specialized tools become essential. Nano Banana 2 targets demand for pro-quality output at flash-tier speed - a combination that addresses specific production constraints.

Sources: