MaskingTape, PackingTape, and GafferTape are the newest anonymous model names drawing attention in the AI image generation community. As of April 4, 2026, the important fact is simple: these names are being discussed as anonymous image models appearing in Arena-style blind testing, but there is no official public confirmation of ownership, architecture, or final product branding.

That distinction matters. Anonymous test codenames create noise fast. A responsible analysis should separate three things:

- What is publicly visible

- What the community is inferring

- What production teams can actually act on today

This post focuses on that boundary.

What Is Actually Verifiable Right Now?

At the moment, the defensible public picture is limited but clear enough to describe:

- maskingtape-alpha, packingtape-alpha, and gaffertape-alpha are being discussed as anonymous image-generation models in Arena-style comparison environments

- Community attention around them centers on strong world knowledge and better-than-expected text rendering

- There is no official OpenAI announcement confirming that these models belong to OpenAI

- There is also no public technical paper, system card, API documentation, or benchmark release for these tape-named models

That means any article claiming exact architecture, pricing, parameter count, or release plan is moving beyond public evidence.

Figure 1: Verified evidence should be separated from community speculation


Why These Anonymous Models Are Getting So Much Attention

The reason is not mystery for its own sake. It is pattern recognition.

The AI model ecosystem has already seen anonymous or semi-anonymous models gain traction in public preference arenas before formal launch. When a model appears under a temporary codename and performs unusually well on prompts users care about, the market starts reverse-engineering its likely origin.

That is what is happening here.

Public discussion has focused on two possible signals:

1. Strong world knowledge in image outputs

Users have highlighted prompts that depend on broad cultural or contextual understanding rather than pure visual style. If a model handles those prompts unusually well, people naturally suspect a company with strong multimodal language-model infrastructure behind it.

2. Strong text rendering

Text rendering remains one of the most commercially meaningful image-gen capabilities. Better text handling is useful for ads, social graphics, UI mockups, product imagery, menus, posters, packaging concepts, and presentation visuals. If an anonymous model appears unusually capable here, that gets immediate attention from both hobbyists and production users.

Neither signal proves ownership. But both explain the level of interest.

Are MaskingTape, PackingTape, and GafferTape OpenAI Models?

The careful answer is: possibly, but not confirmed.

Community discussion has connected these tape-named models to OpenAI because:

- the naming pattern feels like a temporary internal or testing codename

- the reported strengths line up with areas where frontier multimodal systems have been improving

- the models appeared in a context where anonymous testing is already a known pre-launch pattern

That is still inference, not confirmation.

For a blog intended to age well, the safest framing is:

These models are best understood as anonymous image-generation contenders that the community has speculatively linked to OpenAI, without official confirmation as of April 4, 2026.

That sentence is much harder to argue with than "these are definitely GPT-Image-2."

Why There Is Not Enough Benchmark Data for a Serious Ranking

This is the most important practical point.

Right now, there is not enough public evidence to make a serious claim like:

- MaskingTape is better than Nano Banana Pro

- PackingTape beats GPT Image 1.5

- GafferTape is the best text-rendering image model

Why not?

Because defensible model evaluation needs more than viral screenshots. At minimum, teams would want:

- a fixed prompt set

- repeated runs

- category-based scoring

- known generation settings

- enough sample volume to reduce one-off variance

- stable leaderboard visibility over time

Without that, the discussion remains interesting but preliminary.

This is also why production teams should resist overreacting to social posts. Anonymous arena momentum is useful as an early signal, but it is not the same thing as a validated deployment recommendation.
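As a rough illustration of the evaluation checklist in this section, here is a minimal Python sketch that groups repeated-run scores by model and category and flags groups with too few samples. Everything in it is an assumption made for illustration: the record layout, the 0-5 rubric, the `min_samples` threshold, the `model_a` and `model_b` placeholders, and the scores themselves, which are invented and do not describe any real model.

```python
from collections import defaultdict
from statistics import mean, stdev

# Hypothetical records: (model, category, prompt_id, run_index, rubric score 0-5).
# These values are made up purely to exercise the code; they are not measurements.
RESULTS = [
    ("model_a", "text_rendering", "p1", 0, 4.0),
    ("model_a", "text_rendering", "p1", 1, 5.0),
    ("model_a", "text_rendering", "p2", 0, 3.0),
    ("model_a", "world_knowledge", "p3", 0, 4.0),
    ("model_b", "text_rendering", "p1", 0, 3.0),
    ("model_b", "text_rendering", "p1", 1, 3.0),
    ("model_b", "text_rendering", "p2", 0, 4.0),
    ("model_b", "world_knowledge", "p3", 0, 2.0),
]


def summarize(results, min_samples=3):
    """Group scores by (model, category) and report count, mean, and spread."""
    buckets = defaultdict(list)
    for model, category, _prompt_id, _run_index, score in results:
        buckets[(model, category)].append(score)

    summary = {}
    for key, scores in buckets.items():
        summary[key] = {
            "n": len(scores),
            "mean": mean(scores),
            # Sample standard deviation needs at least two scores.
            "stdev": stdev(scores) if len(scores) > 1 else None,
            # Assumed threshold; a real evaluation would want far more samples.
            "enough_samples": len(scores) >= min_samples,
        }
    return summary


if __name__ == "__main__":
    for (model, category), stats in sorted(summarize(RESULTS).items()):
        print(model, category, stats)
```

The point of the sketch is the shape of the data, not the numbers: a single viral screenshot corresponds to one row, and a group with one row has no measurable spread at all.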

What These Models Suggest About the 2026 Image Generation Race

Even without full benchmarks, the tape-model conversation points to something real about the market.

The next competitive layer in image generation is not only raw aesthetics. It is increasingly about a bundle of commercially useful capabilities:

- text rendering that survives real design use

- stronger world knowledge for culturally specific prompts

- better instruction following

- more reliable image editing and compositional control

- outputs that need fewer repair passes in downstream workflows

That shift matters because image generation is moving from novelty toward production infrastructure.

For consumer virality, pretty images are enough. For teams, they are not.

Teams care about whether a model can produce:

- a believable product mockup with usable text

- a campaign visual that reflects recognizable real-world context

- a social creative that does not collapse under detailed instructions

- an edit workflow that preserves structure instead of hallucinating away the original intent

If the tape-named models are getting noticed for world knowledge and text handling, that fits the direction the market is already moving.

A Better Way to Think About MaskingTape, PackingTape, and GafferTape

Instead of treating them as a solved leaderboard event, it is more useful to treat them as a market signal.

That signal has four parts:

Anonymous testing still matters.

Blind comparison environments can surface model quality before branding and launch strategy catch up.

Text rendering is now a frontline feature, not a side metric.

If a model is noticeably better at text, users detect it quickly because the business value is obvious.

World knowledge is becoming visible inside image generation.

When models understand objects, public figures, environments, interfaces, and cultural context more reliably, the gap is noticeable even in casual community testing.

The market increasingly rewards deployable output, not just aesthetic flair.

Models that reduce manual cleanup can become disproportionately important for creators, agencies, and software platforms.

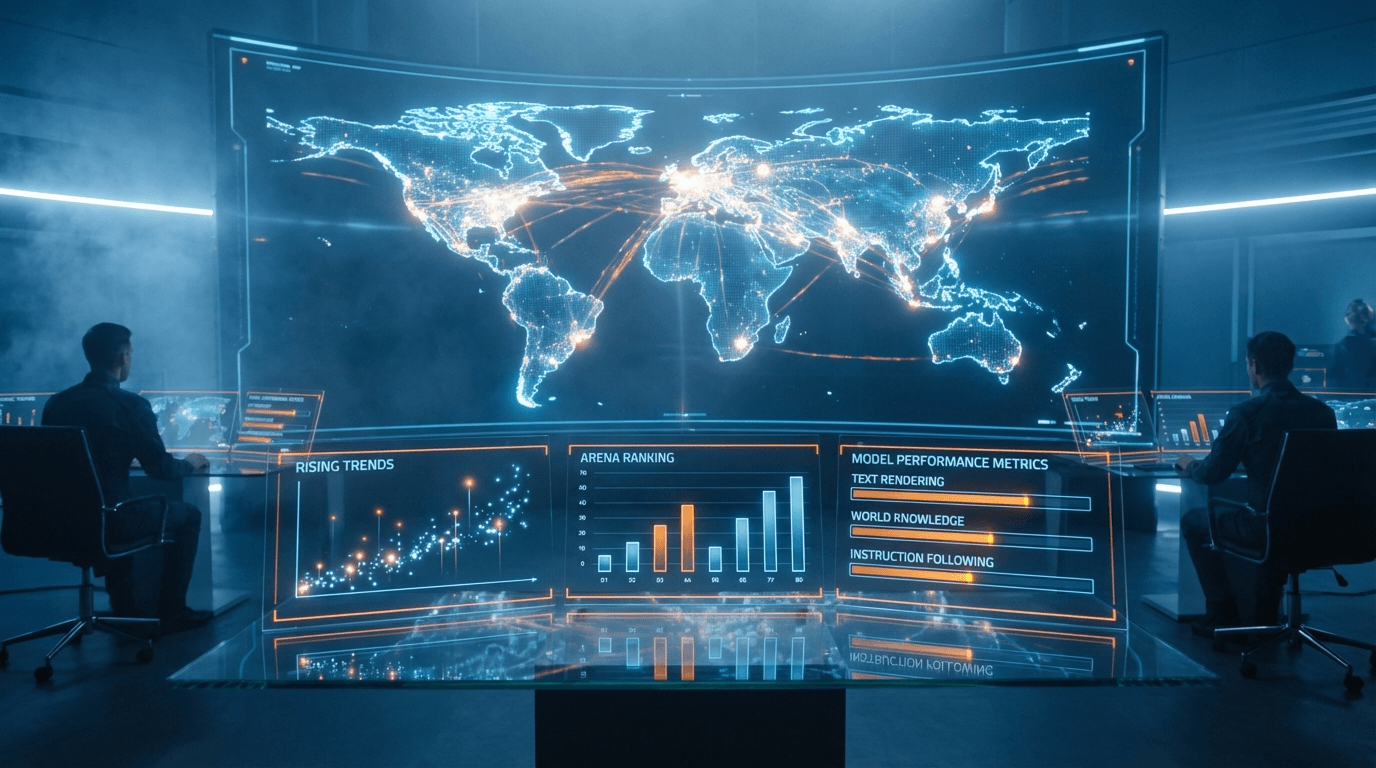

Figure 2: Anonymous testing can signal where the image market is moving


Should Creators or Teams Switch Based on These Models Yet?

Not yet, at least not purely on public chatter.

A practical framework is:

Pay attention now if:

- you actively compare frontier image models for production workflows

- text rendering quality affects your business

- your team benefits from early model scouting

- your workflow already uses multiple model providers

Wait for more evidence if:

- you need pricing clarity

- you need API stability and terms

- you need brand-safety documentation or system cards

- you need repeatable benchmark results before changing workflows

For most teams, the correct move is watchful skepticism. Track the models, but do not redesign your stack around anonymous codenames.

Comparison Context: What We Can Say Safely

Public leaderboard context from Artificial Analysis shows how competitive the current image market already is. As of early April 2026, GPT Image 1.5 and Nano Banana family models are among the most visible first-party contenders on public image leaderboards.

That context matters because any new anonymous breakout model would have to outperform an already strong field, not an empty market.
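One way to make "outperform" concrete for blind head-to-head votes is a win rate reported with an uncertainty interval. The sketch below uses the standard Wilson score interval. It is an illustration only, under stated assumptions: it treats votes as independent, ignores ties, and uses 0.5 as the bar for "ahead". It does not describe how any particular arena or leaderboard computes its rankings, and the function names are hypothetical.

```python
import math


def wilson_interval(wins, total, z=1.96):
    """Wilson score interval for a win rate; z=1.96 is roughly 95% confidence."""
    if total <= 0:
        raise ValueError("total must be positive")
    if not 0 <= wins <= total:
        raise ValueError("wins must be between 0 and total")
    p = wins / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half_width = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - half_width, center + half_width


def clearly_ahead(wins, total, z=1.96):
    """True only if the whole interval sits above a 50% win rate (assumed bar)."""
    low, _high = wilson_interval(wins, total, z)
    return low > 0.5


if __name__ == "__main__":
    # Same 70% observed win rate, very different sample sizes.
    print(clearly_ahead(14, 20))
    print(clearly_ahead(700, 1000))
```

Under these assumptions, 14 wins out of 20 does not clear the bar, while 700 out of 1000 does, even though the observed win rate is the same. That is the gap between a handful of shared comparisons and sustained leaderboard evidence.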

The safest summary is not that the tape models have already won. It is that they have generated enough community interest to be worth watching in a market that now cares deeply about:

- text and typography

- photorealistic prompt understanding

- editing quality

- commercial design usefulness

Figure 3: The practical move is to monitor signal quality, not rush deployment decisions


Bottom Line

MaskingTape, PackingTape, and GafferTape are worth covering, but only with disciplined framing.

The non-controversial version is this:

- they appear to be anonymous image models being tested in public comparison settings

- community discussion suggests unusually strong text rendering and world knowledge

- some observers speculate that they may be related to OpenAI

- there is no official confirmation as of April 4, 2026

- there is not yet enough public benchmark evidence for a hard ranking or deployment recommendation

That still makes them interesting. In fact, it makes them more useful as a market signal than as a subject for myth-making.

For creators, these models are worth monitoring. For teams, they are worth tracking. For anyone publishing analysis, they are worth discussing carefully.

MaskingTape, PackingTape, and GafferTape FAQ

What are MaskingTape, PackingTape, and GafferTape?

They are anonymous image-model names currently being discussed in Arena-style community testing. As of April 4, 2026, no official public documentation confirms their ownership or final product names.

Are MaskingTape, PackingTape, and GafferTape OpenAI models?

They are widely rumored to be connected to OpenAI, but that has not been officially confirmed. The safest wording is that the connection is community speculation, not verified fact.

Why are people talking about these anonymous image models?

Most discussion focuses on two reported strengths: strong world knowledge and strong text rendering. Both capabilities are highly valuable in practical image-generation workflows.

Is there enough benchmark data to rank them?

Not yet in a rigorous way. Social examples and early arena attention are useful signals, but they do not replace stable, repeated benchmark evaluation.

Should teams switch to these models now?

Most teams should wait for official confirmation, clearer availability, pricing, safety documentation, and more durable benchmark evidence before making workflow changes.